Access the sample app using the load balancer DNS name.

14. Destroy your resources with the terraform destroy command.

Fork my repo for more details; all of the code is in the GitHub project linked in this post.
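To make the load balancer DNS name easy to find after an apply, it can be exposed as a Terraform output. The following is a minimal sketch, not the code from the repo: the resource name `app`, the load balancer name, and the three input variables are hypothetical placeholders, and the health check values are arbitrary.

```hcl
# Sketch only: names, variables, and health check values are assumptions.
variable "public_subnet_ids" {
  type = list(string)
}

variable "lb_security_group_id" {
  type = string
}

variable "private_instance_ids" {
  type = list(string)
}

# Classic Load Balancer in front of the private EC2 instances.
resource "aws_elb" "app" {
  name            = "sample-app-clb"
  subnets         = var.public_subnet_ids
  security_groups = [var.lb_security_group_id]
  instances       = var.private_instance_ids

  listener {
    instance_port     = 80
    instance_protocol = "http"
    lb_port           = 80
    lb_protocol       = "http"
  }

  # Instances are reported healthy once this check passes.
  health_check {
    target              = "HTTP:80/"
    interval            = 30
    timeout             = 5
    healthy_threshold   = 2
    unhealthy_threshold = 2
  }
}

# Printed at the end of terraform apply.
output "elb_dns_name" {
  description = "DNS name used to reach the sample app"
  value       = aws_elb.app.dns_name
}
```

With an output like this in place, `terraform output elb_dns_name` prints the name to open in a browser.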

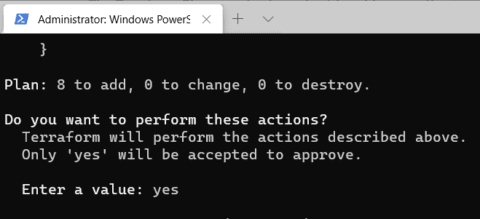

Note: for every resource I have created a variable.tf and an output file.

Create the VPC; for that I have used modules: 1 VPC, 6 subnets (public, private, database), 1 NAT gateway in a public subnet, and 1 internet gateway attached to the VPC. In GitHub, c4-01-vpc-variables.tf contains the variables and c4-03-vpc-outputs.tf contains all outputs related to the VPC.

5. Create EC2 instances: 1 bastion host and 2 private EC2 instances in 2 AZs. Module link: /modules/terraform-aws-modules/ec2-instance/aws/latest
6. Attach an EIP to the bastion host so that it has a static IP.
7. Create a data source file so that it can dynamically fetch the latest AMI in AWS.

Classic load balancer files: c10-01-ELB-classic-loadbalancer-variables.tf, c10-03-ELB-classic-loadbalancer-outputs.tf

9. Create 3 security groups (bastion host, private EC2, and load balancer).
10. With the help of provisioners, copy the file from local (the private key file you copied) to the bastion host with the file provisioner, and run some commands on the remote host with the remote-exec provisioner.
11. Now run terraform init, terraform validate, terraform plan, and terraform apply.
13. Verify that the load balancer instances are healthy.

I am trying to connect to a private EC2 instance through a bastion server using Terraform. But the Terraform script asks the question 'Are you sure you want to continue connecting (yes/no)' and I am not able to pass the answer 'yes' to it.
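The Elastic IP, latest-AMI data source, and provisioner steps could look roughly like the sketch below. It is illustrative rather than the repo's actual code: the variable names, the key path, the `ec2-user` login, and the Amazon Linux 2 name filter are all assumptions. It also assumes AWS provider v5 or later, where `aws_eip` takes `domain = "vpc"`.

```hcl
# Sketch only: names, paths, and the AMI filter are assumptions.
variable "bastion_instance_id" {
  type = string
}

variable "private_key_path" {
  type    = string
  default = "private-key/terraform-key.pem"
}

# Look up the most recent Amazon Linux 2 AMI at plan time.
data "aws_ami" "amzlinux2" {
  most_recent = true
  owners      = ["amazon"]

  filter {
    name   = "name"
    values = ["amzn2-ami-hvm-*-x86_64-gp2"]
  }
}

# Static public IP for the bastion host.
resource "aws_eip" "bastion" {
  instance = var.bastion_instance_id
  domain   = "vpc"
}

# Copy the private key to the bastion, then run a command there.
resource "null_resource" "copy_key" {
  depends_on = [aws_eip.bastion]

  connection {
    type        = "ssh"
    host        = aws_eip.bastion.public_ip
    user        = "ec2-user"
    private_key = file(var.private_key_path)
  }

  provisioner "file" {
    source      = var.private_key_path
    destination = "/tmp/terraform-key.pem"
  }

  provisioner "remote-exec" {
    inline = ["sudo chmod 400 /tmp/terraform-key.pem"]
  }
}

output "latest_ami_id" {
  value = data.aws_ami.amzlinux2.id
}
```

Attaching the provisioners to a `null_resource` keeps the key copy separate from the instance itself, so the copy can be rerun without recreating the bastion.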

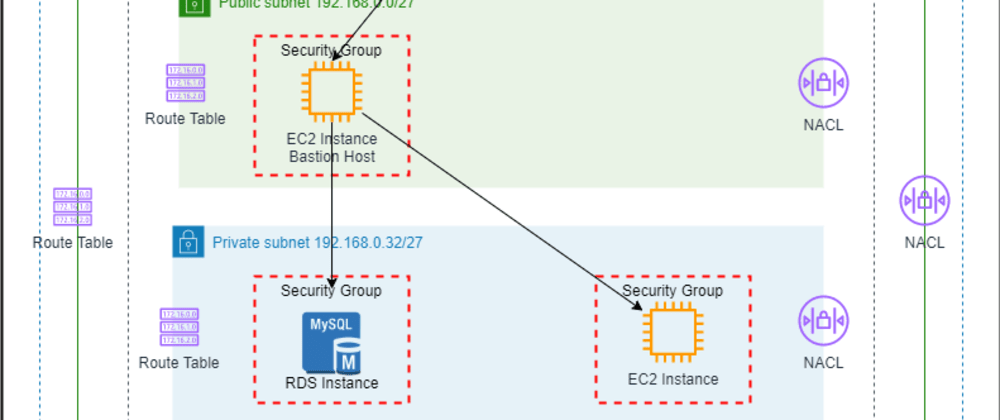

Today we will be deploying a 3-tier application using Terraform in AWS.

AWS services: EC2 instances, VPC, NAT gateway, internet gateway, security groups, Classic Load Balancer.

GitHub link: /piya199616/Terraform-aws-project

- 1 VPC, 6 subnets (public, private, database), 1 NAT gateway in a public subnet, 1 internet gateway attached to the VPC.
- 1 bastion host and 2 private EC2 instances in 2 AZs.
- 3 security groups (bastion host, private EC2, and load balancer).

With the help of a provisioner, we will copy a file from local (the private key file you copied) to the bastion host and run some commands on the remote host.

1. Install the Terraform CLI on your local system and configure AWS credentials with the aws configure command.
2. Create a Terraform settings block that includes the provider and Terraform version details.
3. Create a bash script for the installation of httpd.

I am trying to spin up an AWS bastion host on AWS EC2. I am using the Terraform module provided by Guimove. I am getting stuck on the bastionhostkeypair field. I need to provide a keypair that can be used to launch the EC2 template, but the bucket (awss3bucket.bucket) that needs to contain the public key of the key.

You can use bastion hosts to create SSH connections to compute instances that don't have a public IP. Generate an SSH key pair to access private instances.

azs = data.aws_availability_
private_subnet_cidr =
private_subnets = local.private_subnet_cidr
public_subnets = local.public_subnet_cidr

- kubectl is installed on the bastion host and has access to the EKS cluster.
- Security group access between the bastion host and worker nodes in the Amazon EKS cluster.
- Port 3389 (RDP) to access Windows worker nodes.

It's entirely possible this is fully a networking problem and not an EKS/Terraform problem, as my understanding of how the VPC and its security groups fit into this picture is not entirely mature.
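As a rough illustration of the Terraform settings block and the VPC layout described in this post (public, private, and database subnets with one NAT gateway), a configuration might look like the sketch below. The version constraints, region, VPC name, and CIDR ranges are placeholders. The use of the `terraform-aws-modules/vpc/aws` registry module is also an assumption, since the post only says that modules were used.

```hcl
# Sketch only: versions, region, names, and CIDR ranges are placeholders.
terraform {
  required_version = ">= 1.5.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

provider "aws" {
  region = "us-east-1"
}

# Availability zones currently usable in the region.
data "aws_availability_zones" "available" {
  state = "available"
}

locals {
  public_subnet_cidr   = ["10.0.101.0/24", "10.0.102.0/24"]
  private_subnet_cidr  = ["10.0.1.0/24", "10.0.2.0/24"]
  database_subnet_cidr = ["10.0.151.0/24", "10.0.152.0/24"]
}

module "vpc" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.0"

  name = "three-tier-vpc"
  cidr = "10.0.0.0/16"

  # Two AZs with three subnet tiers each gives 6 subnets.
  azs              = slice(data.aws_availability_zones.available.names, 0, 2)
  public_subnets   = local.public_subnet_cidr
  private_subnets  = local.private_subnet_cidr
  database_subnets = local.database_subnet_cidr

  # A single NAT gateway, placed in a public subnet.
  enable_nat_gateway = true
  single_nat_gateway = true
}
```

Keeping the CIDR lists in `locals` matches the `local.private_subnet_cidr` and `local.public_subnet_cidr` references that appear in the fragments above.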
I am working on a Terraform project that has an end goal of an EKS cluster with the following properties:

- A bastion host that can be used for troubleshooting and is accessible from your workstation.
- Resources (deployments, cron jobs, etc.) configurable via the Terraform Kubernetes module.

To accomplish this, I've modified the Terraform EKS example slightly (code at bottom of the question). The problem that I am encountering is that after SSH-ing into the bastion, I cannot ping the cluster, and any commands like kubectl get pods time out after about 60 seconds.

Here are the facts/things I know to be true:

- I have (for the time being) switched the cluster to a public cluster for testing purposes. Previously, when I had cluster_endpoint_public_access set to false, the terraform apply command would not even complete, as it could not access the /healthz endpoint on the cluster.
- The bastion configuration works in the sense that the user data runs successfully and installs kubectl and the kubeconfig file.
- I am able to SSH into the bastion via my static IP (that's the var.company_vpn_ips in the code).

Features: This module will create an SSH bastion to securely connect over SSH to your private instances. All SSH commands are logged to an S3 bucket for security compliance, in the /logs path. SSH users are managed by their public key; simply drop the SSH key of the user in the /public-keys path of the bucket.
